Once you start looking at how AI systems are actually built, there is a very natural conclusion people tend to reach, and to be fair, it sounds perfectly reasonable at first.

If NAND is too slow for certain parts of the workload, and even advanced flash architectures still introduce enough delay to matter, then the obvious answer would seem to be adding more DRAM. After all, DRAM has always been the fast layer. It is where active data lives, it responds quickly, and for decades it has been the part of the system you lean on when you do not want the processor sitting idle waiting for something to arrive.

So the assumption is easy to make: if speed is the problem, then expand the fastest thing you have.

That logic holds together nicely until AI enters the picture and starts pushing DRAM into a role it was never really designed to fill. The problem is not that DRAM has suddenly become slow, or obsolete, or somehow less useful than it was before. The problem is that AI workloads are asking it to do far more than simply act as a fast working layer between compute and storage.

For the broader framework behind this shift, this article ties directly back to the main pillar piece here: NAND isn’t going away, but AI servers now depend on more than flash.

DRAM Was Built for Speed, Not for Carrying the Entire System

The first thing to understand is that DRAM has always been optimized around speed and responsiveness, not around holding enormous amounts of data at scale. In traditional computing, that distinction was rarely a problem because most workloads had a fairly clean separation between active data and stored data. The system kept what it needed immediately in memory, pulled the rest from storage when necessary, and the handoff was usually good enough that nobody thought much about it.

AI changes that balance rather dramatically. Instead of working through modest chunks of active data and moving on, AI models tend to revisit large datasets repeatedly, move information in parallel, and keep a much bigger portion of the working set within reach of the compute layer for much longer periods of time. That means DRAM is no longer being asked to simply hold the current task. It is being asked to help hold an enormous and constantly shifting body of data that the system wants nearby at all times.

That is a very different job.

It is also why technologies above and around DRAMA type of fast, volatile memory used to store active data for quick access by the processor. have become more important. In the earlier article on High Bandwidth Memory and why AI depends on it, the focus was on moving a smaller amount of critical data extremely close to the processor so the GPU stays fed. That article makes the point that proximity matters, but it also quietly reveals the next problem, because once the working set grows beyond that immediate layer, the system still has to decide where everything else should live.

The First Wall Is Cost, and It Shows Up Fast

One of the reasons people like the idea of “just add more DRAM” is because it sounds clean and direct. In practice, it becomes expensive very quickly. DRAM is simply not priced like NAND, and once you start scaling systems into AI territory, you are no longer talking about adding a little extra memory to a server. You are talking about hundreds of gigabytes, sometimes far more, across many nodes, racks, and clusters.

At that point, DRAM stops feeling like a performance upgrade and starts looking like an infrastructure burden. The cost curve does not rise gently. It climbs fast enough that the idea of using DRAM to solve every data locality problem begins to break apart under its own economics.

This is one of the reasons the memory stack is getting deeper rather than simpler. The industry is not moving away from DRAM because it stopped being valuable. It is moving away from the assumption that DRAM alone can be the answer to every latency-sensitive problem at AI scale.

The Second Wall Is Power, and That Problem Never Sleeps

Even if cost were easier to justify, DRAM still runs into another issue that becomes impossible to ignore once systems get large enough, and that is power. DRAM must be constantly powered to maintain its state. That is just part of the technology. So the more you add, the more energy the system consumes simply to keep data sitting there ready.

In smaller environments, that overhead may feel acceptable. In dense AI systems running continuously, it starts to become a major operational issue. More DRAM means more power draw, more heat, more cooling, and more design pressure on the entire platform. Suddenly the decision is not just about memory capacity. It is about thermal limits, data center efficiency, and whether the supporting infrastructure can absorb the cost of keeping that much active memory alive around the clock.

This is also where the role of intermediary layers starts to make more sense. In the previous installment on storage class memory between DRAM and NAND, the idea was not to replace DRAM, but to relieve some of the pressure on it by introducing a layer that keeps more data closer to compute without forcing everything into the most expensive and power-hungry tier.

Then There Is the Physical Reality of Proximity

There is another reason DRAM does not scale infinitely well in AI systems, and it has less to do with budget and more to do with physics. DRAM delivers value partly because it sits relatively close to the processor. The closer memory is to compute, the lower the latency tends to be and the more responsive the overall system feels. But proximity is not something you can expand forever without consequences.

There are physical limits to how much memory can be placed near a CPU or GPU before layout complexity, trace length, signal integrity, and packaging constraints begin to work against you. This is exactly why advanced memory packaging showed up in the first place. HBM exists because traditional DRAM placement can only go so far, and once the compute side becomes fast enough, those distances and pathways begin to matter more than they used to.

But HBM is not a full-capacity answer either. It offers incredible bandwidth, but not unlimited volume. So the system ends up living in a constant balancing act between what can be placed very close and what has to sit farther away. AI workloads stretch that balancing act much harder than conventional systems ever did.

AI Makes Small Delays Expensive

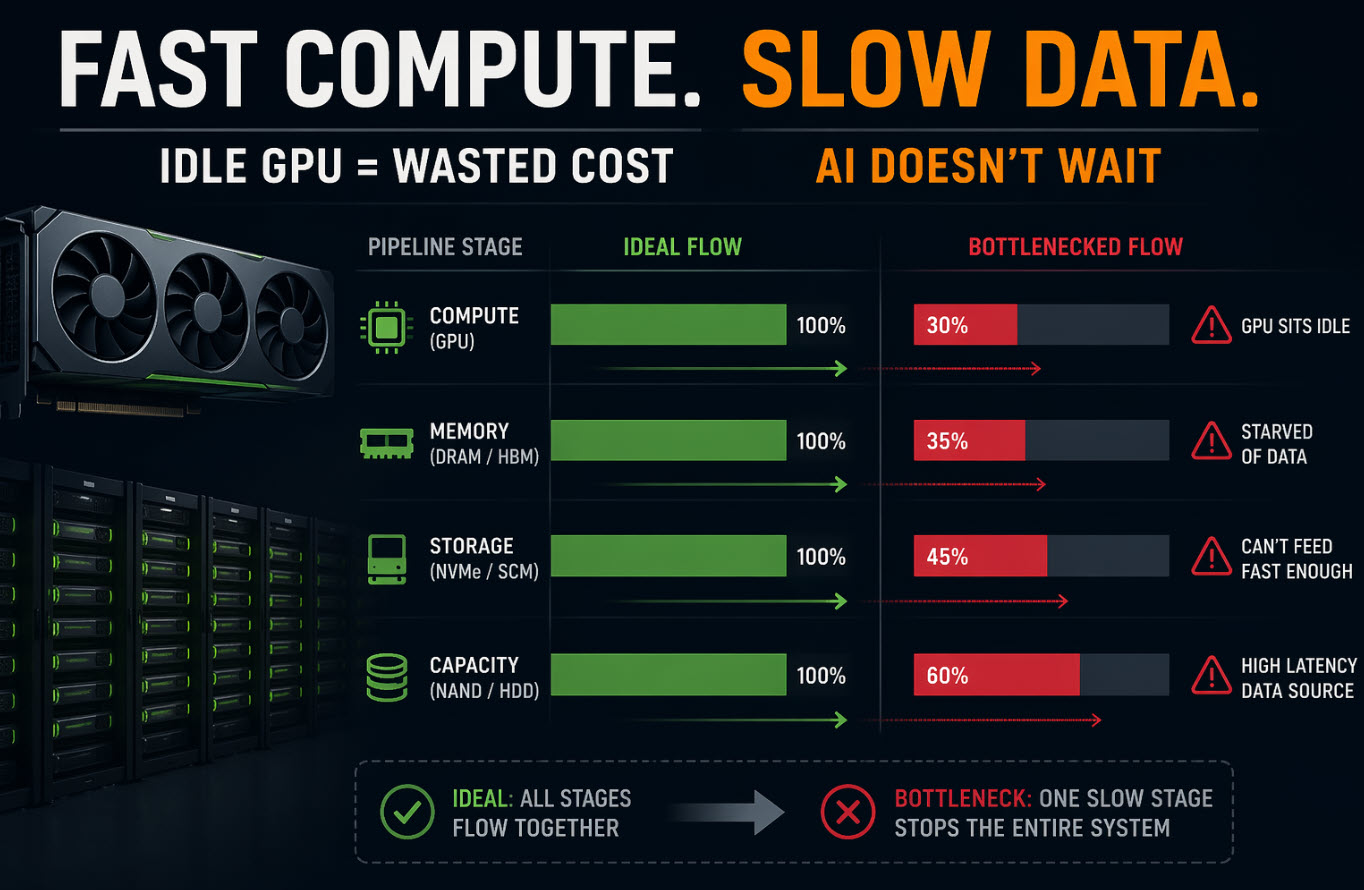

One of the more interesting things about AI infrastructure is that it exposes inefficiencies that older workloads could mostly hide. In a more traditional system, a slight delay in data access might not amount to much. The processor waits a bit, the task finishes a little later, and the user never notices. AI systems are far less forgiving because they operate with so much parallelism and so much money tied up in the compute layer.

If a GPU is not getting data when it needs it, that is not just a technical nuisance. It is expensive idle time. Multiply that across many accelerators running in parallel and even very small delays start to show up as real losses in utilization.

That changes the objective. The goal is not simply to have fast memory. The goal is to maintain consistent data delivery at a scale large enough to keep the most expensive parts of the system busy all the time. That is a much harder requirement, and it is exactly why DRAM alone starts to look insufficient once AI infrastructure grows beyond a certain point.

The Warehouse Analogy Still Works – It Just Gets Bigger

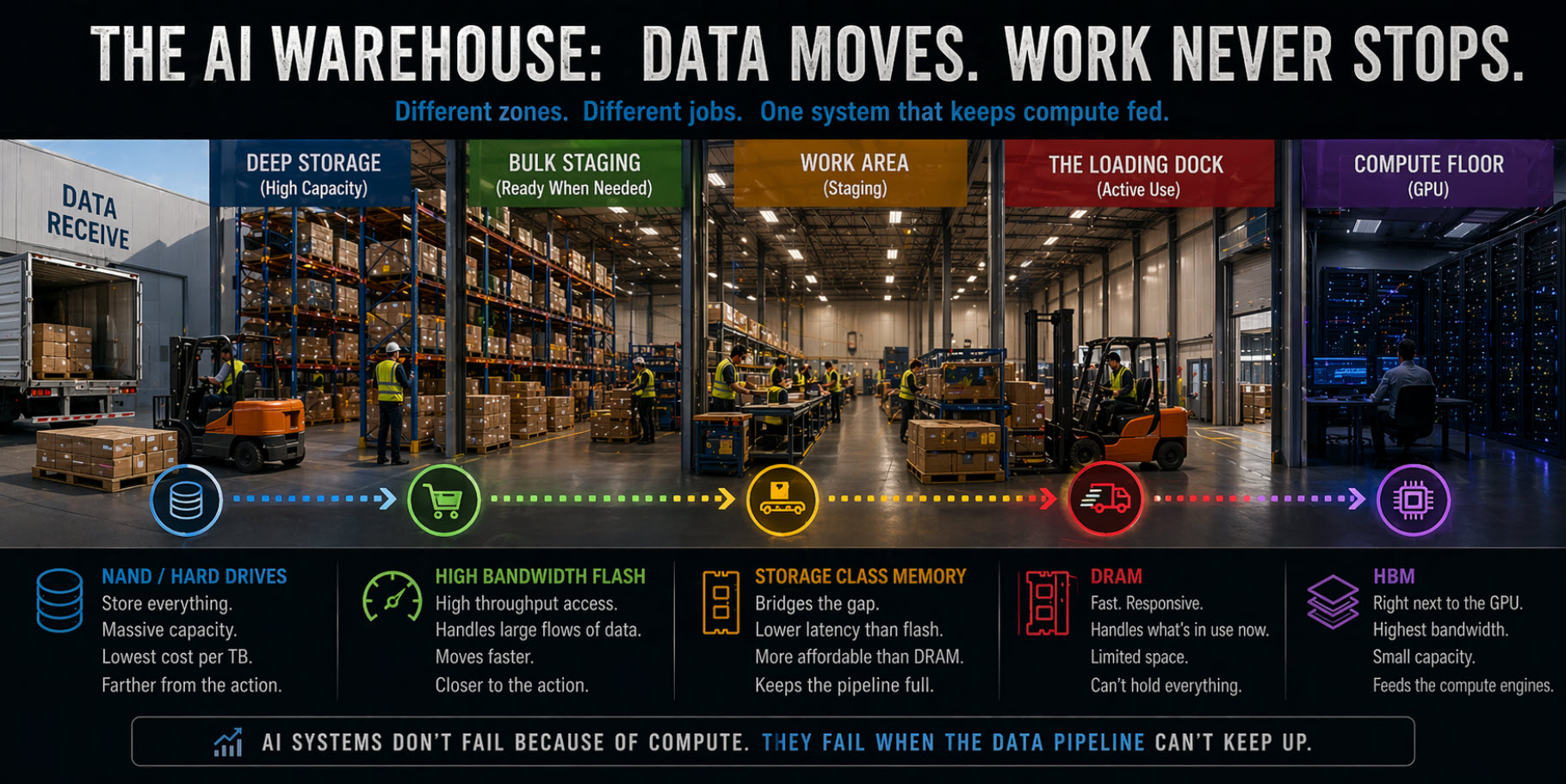

If we keep using the same warehouse analogy from the earlier articles, DRAM is still the loading dock. It is where active work happens, where items are opened, sorted, and moved into immediate use. For years, that model worked well because the amount of activity at the dock was manageable and the system did not demand that everything be staged there at once.

AI changes the scale of the operation. Now the dock is expected to support a near-constant flood of material, with far more activity happening in parallel and far less tolerance for delay. At some point, even the best loading dock cannot simply keep expanding. There is only so much room, only so many parallel movements that can happen efficiently, and only so much inventory you can keep directly at the point of use before the layout itself becomes part of the problem.

So the answer is not to make the dock infinitely larger. The answer is to redesign the workflow around it.

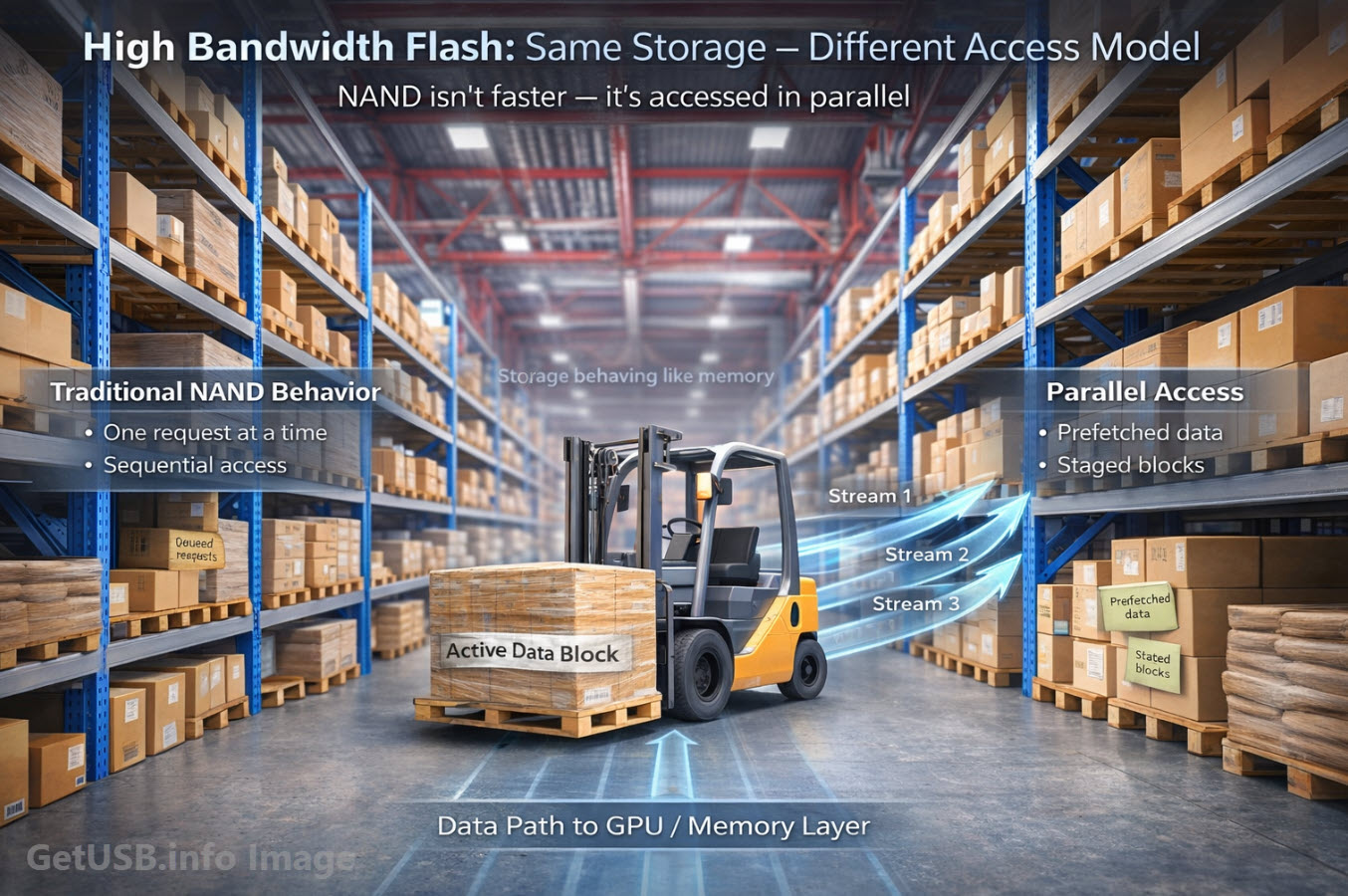

That is where the rest of the memory hierarchy starts to earn its place. HBM keeps the most time-sensitive data right next to the processor. Storage class memory helps smooth out the transition between active memory and slower storage. And in the more recent article on how high bandwidth flash pushes NAND closer to memory-like behavior, the focus shifted to how the storage side is also being redesigned so it can participate more intelligently in feeding the system.

None of those layers exist because DRAM failed. They exist because AI outgrew the idea that a single fast layer could carry the whole workload by itself.

What This Really Means for the AI Memory Stack

The real takeaway here is not that DRAM is going away, because it clearly is not. DRAM remains one of the most important parts of the entire stack. What is changing is its role. Instead of being the place where everything active is supposed to live, DRAM is becoming the place where the most urgent and time-sensitive data lives, while other layers handle the growing burden of scale, cost, and capacity.

That is a subtle shift, but it is an important one. It means AI infrastructure is moving away from the older idea of a simple two-layer model – memory here, storage there – and toward something much more nuanced, where different technologies are each asked to handle the part of the workload they are best suited for.

Put simply, DRAM is still essential, but it is no longer enough by itself. AI has changed the size of the working set, the speed of the compute, the cost of delay, and the economics of keeping everything close. Once all of that changes at the same time, the memory hierarchy has to change with it.

Where This Leads Next

Once you accept that DRAM cannot stretch far enough to hold everything AI wants near compute, the next question becomes fairly obvious. Where does the rest of that data actually live, especially when the amount of information involved is far too large to justify keeping in memory?

That is where the conversation turns again, and a technology many people assume has already been pushed aside starts to matter in a surprisingly important way. Because while DRAM struggles with scale and flash still carries cost and latency trade-offs of its own, hard drives continue to offer something the rest of the stack cannot easily replace: practical capacity at massive volume.

And that is exactly why the next part of this series has to look at why hard drives are still critical for AI infrastructureThe hardware and system architecture designed to support the unique demands of artificial intelligence workloads..

About the Author

This article was developed under the direction of Greg Morris, a long-time contributor to GetUSB.info with over two decades of experience in USB technology, flash memory behavior, and data storage systems. The perspective presented here reflects hands-on industry knowledge and ongoing analysis of how real-world systems perform under evolving workloads, including AI infrastructure.

How This Article Was Created

The concepts, structure, and technical direction of this article were authored and reviewed by a human subject matter expert. AI tools were used to assist with rhythm, flow, and readability, helping organize complex ideas into a more natural narrative without altering the underlying technical accuracy or intent.

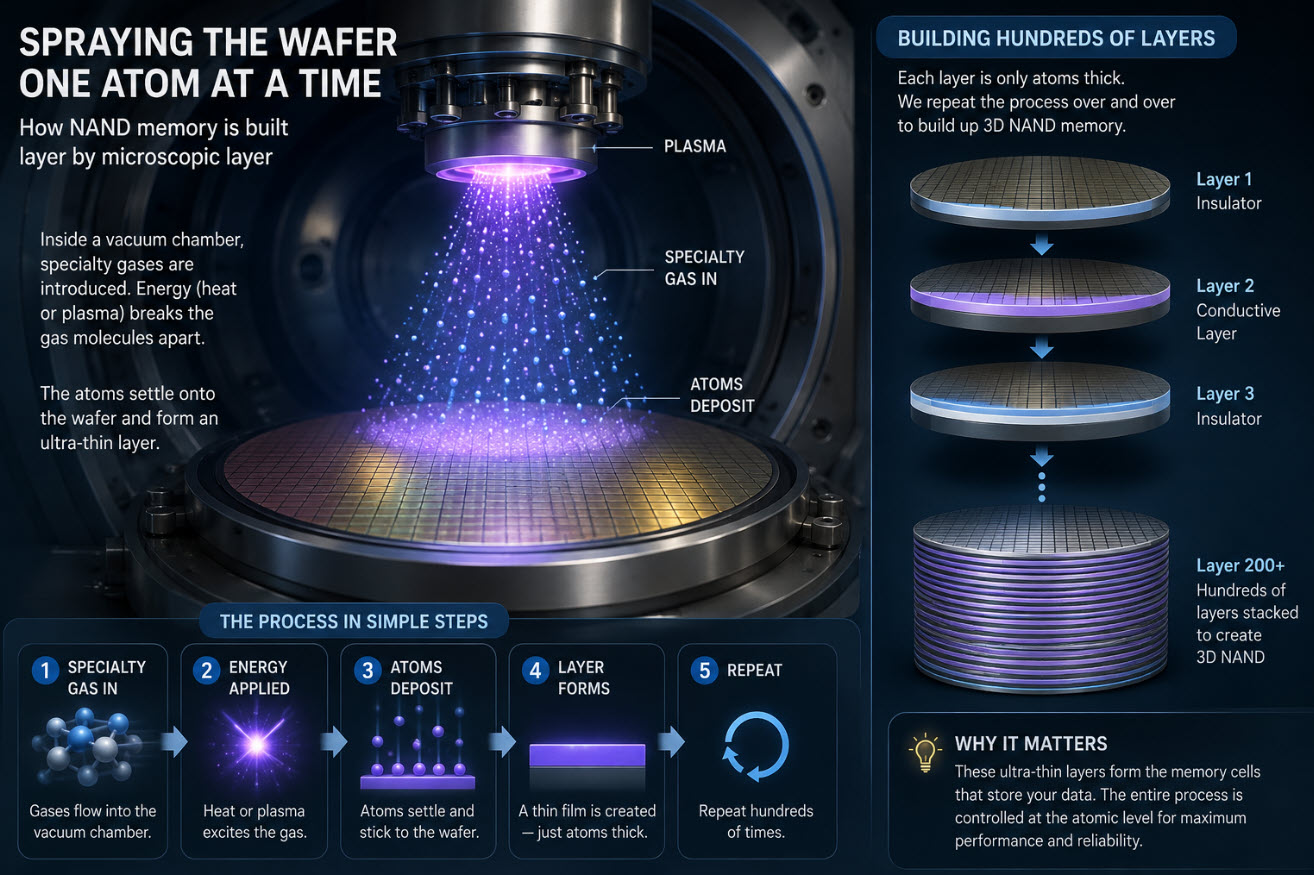

About the Visuals

The images used in this article were created specifically to illustrate concepts that are difficult to capture with traditional stock photography, such as data flow bottlenecks, memory hierarchy behavior, and system-level inefficiencies. Visuals are designed to reinforce the technical explanations and improve clarity for readers.

Let GetUSB.info keep you updated.

Receive article notifications about USB storage, flash memory, and duplication updates in your preferred language. We average a couple of articles per week.