Flash Memory Holds the World’s Data – But Not Its Own Story

If you go looking for a museum dedicated to flash memory, you’ll come up surprisingly short. There is one, tucked inside a storage facility in China (part showroom, part historical display), but it’s not something the public visits, and it’s not trying to be a permanent archive. It’s more of a curated reminder that the technology even has a past.

That’s a strange position for something that quietly holds most of the world’s data.

Flash memory sits underneath everything now, from USB drives and SD cards to SSDs and embedded systems, yet there’s almost no physical record of how it evolved. No central archive. No widely recognized collection. No place where you can walk through the progression from early removable cards to the controller-driven storage systems we rely on today. For a technology this important, the absence is hard to ignore once you start looking for it. If you want to step back and understand the basics of how data actually gets stored across these devices, it’s worth reviewing how we store files on USB flash or USB hard drives before diving deeper into the architecture behind them.

And the more you think about it, the more uncomfortable it gets. This isn’t just a gap in preservation; it’s a structural problem with the technology itself. Flash memory is very good at storing data, but it turns out it’s not very good at preserving its own history.

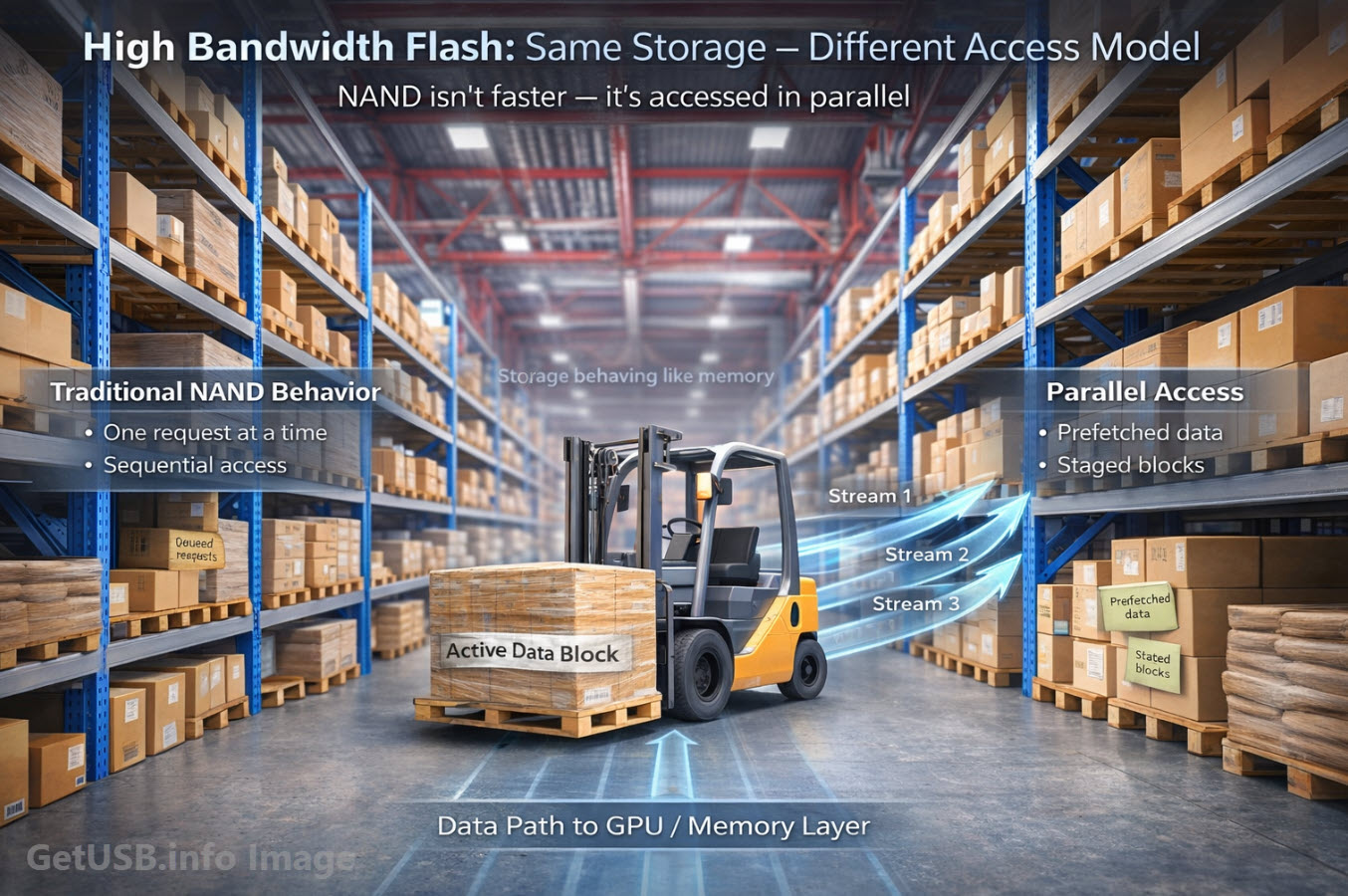

At the center of all this is NAND flash, the core technology behind nearly every modern storage device. It’s not just part of the conversation right now; it is the conversation. Supply constraints, scaling limits, controller complexity, enterprise demand: NAND is showing up in industry reports, earnings calls, and infrastructure planning in a way it never did a decade ago.

And that pressure isn’t slowing down. If anything, it’s accelerating.

The rise of artificial intelligence, particularly the shift from today’s large-scale models toward what many are calling Artificial General Intelligence (AGI), is driving an entirely new class of data demand. AGI, in simple terms, refers to systems that can reason, learn, and adapt across a wide range of tasks at a human-like level, rather than being limited to narrow, specialized functions. Whether or not AGI arrives soon, the direction is clear: more models, more data, more checkpoints, more storage layers feeding increasingly complex systems.

Flash memory sits right in the middle of that pipeline.

Training datasets, model weights, inference caching, edge deployment: these aren’t theoretical workloads. They’re happening now, and they all depend on fast, dense, reliable storage. NAND has become foundational not just for consumer devices, but for the infrastructure shaping the next phase of computing.

Which makes the situation even more unusual.

At the exact moment flash memory becomes one of the most critical technologies in the world, it remains one of the least preserved.

So if a real flash memory museum did exist-something more than a small corporate exhibit-what would it actually show?

A Walk Through a Flash Memory Museum

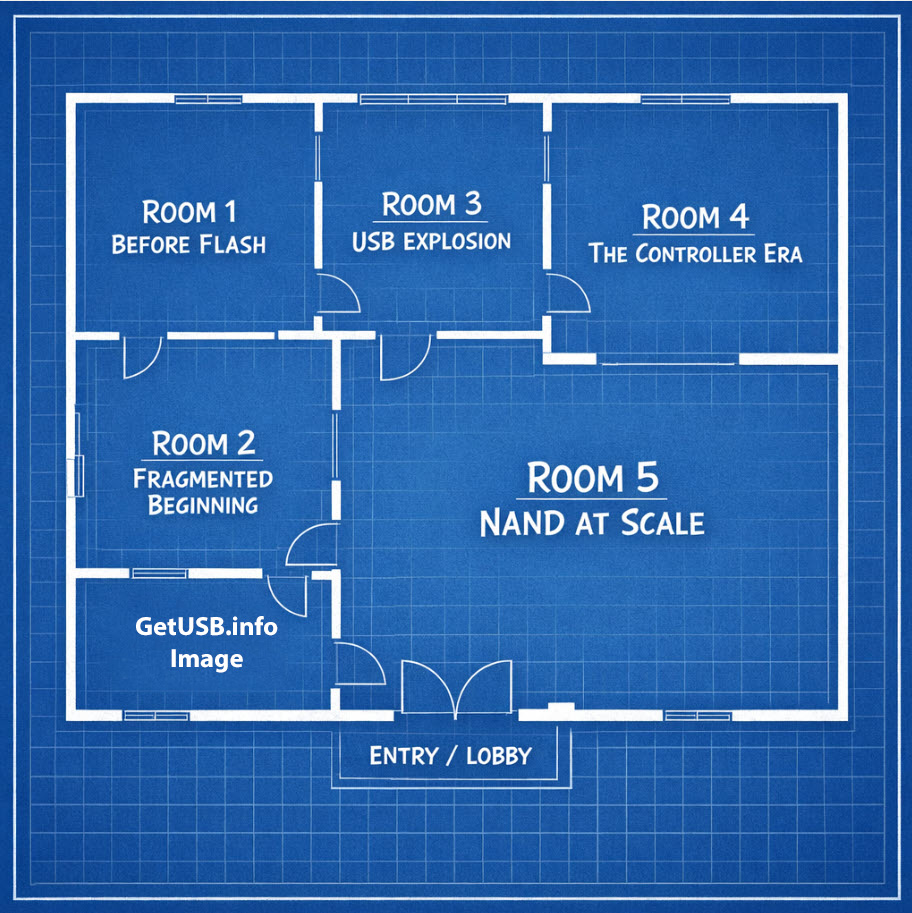

If a real flash memory museum existed, it wouldn’t feel like a timeline on a wall with dates and product launches. It would feel more like walking through the layers of how storage actually works, with each room getting larger or smaller depending on how much it truly contributes to the final device.

Not all parts of flash storage carry equal weight. Some are visible but simple. Others are completely hidden and carry most of the cost, the risk, and the engineering effort. If you laid that out physically, the proportions would tell a very different story than most people expect.

The Museum Floor Plan That Tells the Real Story

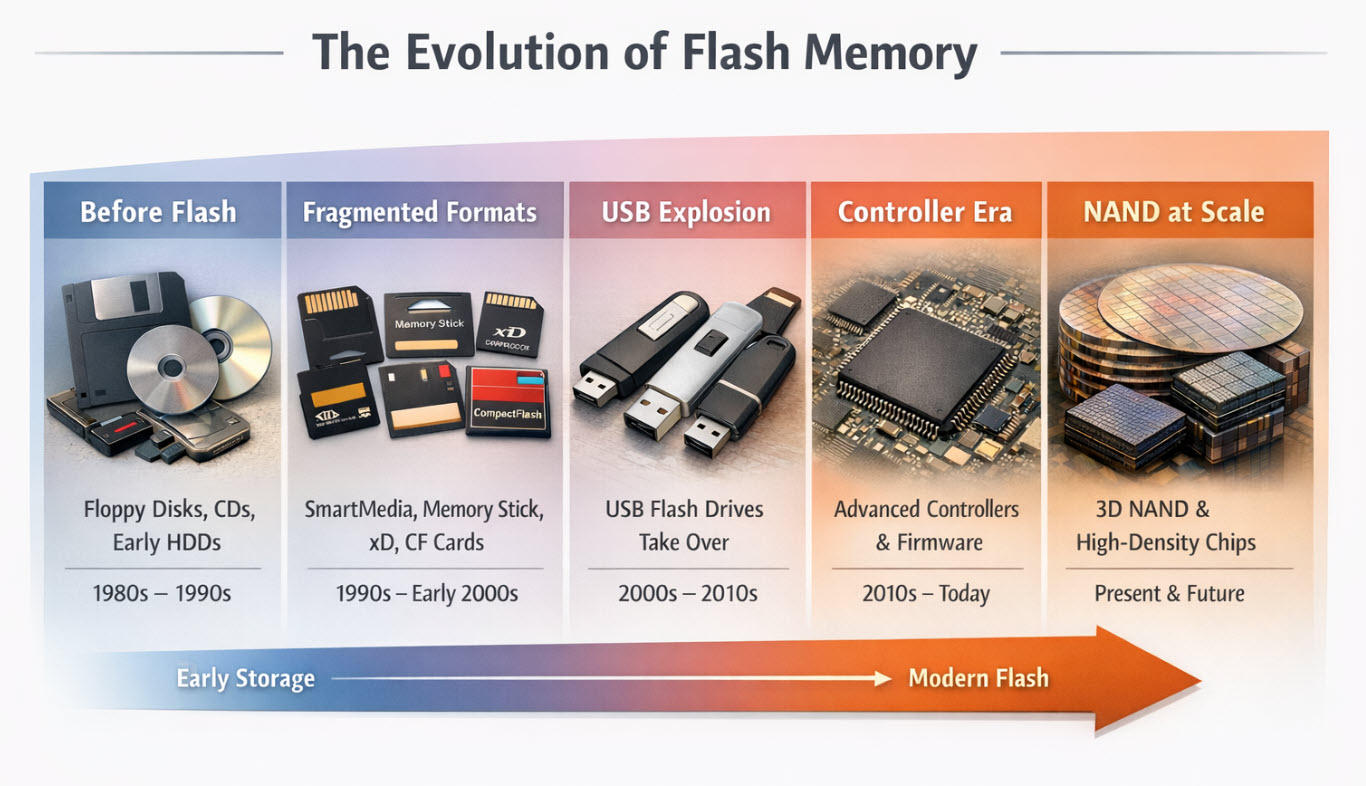

Room 1 – Before Flash (Small Room – ~5%)

You’d start in a smaller room, almost easy to overlook if you weren’t paying attention.

Floppy disks, optical media, maybe a few early hard drives. Physical storage you can pick up, look at, and understand without much explanation. Data had a place you could point to. If something failed, it usually failed in a way you could see or hear.

There’s a certain comfort in that.

This room matters because it sets the baseline. It reminds you that storage used to be tangible and, in many cases, surprisingly durable if handled correctly. But in terms of how modern flash devices are built and what they cost, this part of the story doesn’t take up much space anymore. It’s context, not contribution.

Room 2 – The Fragmented Beginning (Medium Room – ~10–15%)

The next room gets a bit more crowded, and a bit less orderly.

You start seeing SmartMedia, Memory Stick, xD-Picture Card, and CompactFlash: formats that feel familiar if you were around long enough, but also a little disconnected from each other. Different shapes, different connectors, different assumptions about how the memory would be used.

At first glance it looks like a simple format war, but that’s not really what was happening. Underneath those form factors were real limitations tied to controller capability, NAND density, and how data could be managed reliably. Some formats hit scaling walls early. Others were too tightly controlled to gain broad adoption. A few just became too expensive to justify once better options appeared.

They didn’t disappear because people stopped liking them. They disappeared because they couldn’t keep up.

This room takes up more space because it represents a period where the industry was still figuring things out, and that process wasn’t cheap. There’s a lot of engineering buried in the formats that didn’t survive.

Room 3 – The USB Explosion (Large Room – ~20–25%)

Then you walk into a room that opens up in a noticeable way.

This is where USB flash drives take over, and everything starts to feel more unified. The shapes get simpler, the interfaces standardize, and the idea of portable storage stops being a niche use case and turns into something almost expected.

What’s interesting is that while things look simpler on the outside, this is the point where the inside starts getting more complicated. Controllers become more capable, NAND gets denser, and manufacturing scales in a way that turns flash into a commodity.

This is also where flash disappears into the background. It’s no longer the feature; it’s just there, doing its job. People stop thinking about how it works and start assuming it will always be available when they need it.

From a cost perspective, this room is substantial because it reflects the shift to mass production and global adoption. It’s where flash becomes part of everyday computing rather than something you go out of your way to buy.

Room 4 – The Controller Era (Largest Room – ~30–40%)

At some point you step into the largest room, and if you didn’t already understand flash memory, this is where things start to click.

Because this is where the real work happens.

You don’t just see chips in this room; you see the logic behind them. The controller, the firmware, the mapping between what the system thinks it’s writing and what the NAND can actually support. It’s the part of the system that most people never see, but it’s doing constant translation, correction, and decision-making in the background.

The thing to understand is that raw NAND isn’t particularly reliable on its own. Cells wear out, bits drift, blocks go bad. Left unmanaged, it wouldn’t be usable for long. The controller is what turns that unstable medium into something that behaves like stable storage.
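Bits drifting is the reason every flash controller runs error-correcting code (ECC): extra parity bits are stored alongside the data so that a flipped bit can be located and corrected when it is read back. Modern controllers generally rely on BCH or LDPC codes; the sketch below swaps in the far simpler Hamming(7,4) code, which corrects one flipped bit per 7-bit codeword, purely to show the principle. The function names are hypothetical.

```python
# Illustrative only: Hamming(7,4) stands in for the BCH/LDPC codes that real
# flash controllers use. It can correct any single flipped bit per codeword.

def encode(d: list[int]) -> list[int]:
    """Encode 4 data bits into a 7-bit codeword laid out as positions 1..7."""
    d1, d2, d3, d4 = d
    p1 = d1 ^ d2 ^ d4  # parity over positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]

def decode(codeword: list[int]) -> list[int]:
    """Fix up to one flipped bit, then return the 4 data bits."""
    c = list(codeword)  # work on a copy so the caller's data is untouched
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    syndrome = s1 + 2 * s2 + 4 * s3  # 1-based position of the bad bit, 0 if clean
    if syndrome:
        c[syndrome - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]

stored = encode([1, 0, 1, 1])
stored[5] ^= 1          # simulate one bit drifting in the cell array
print(decode(stored))   # [1, 0, 1, 1]
```

Production codes protect far larger codewords and correct many bit errors per codeword rather than one, which is part of why this logic is so expensive to get right.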

The controller decides where data goes, how long it stays there, when it needs to be moved, and how errors are handled along the way. It’s also why two devices that look identical on paper can behave very differently in the real world.
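Those decisions live in what is usually called the flash translation layer (FTL). The sketch below is a hypothetical, heavily simplified FTL: it maps logical blocks to physical blocks, never overwrites in place, steers each write to the least-worn free block (dynamic wear leveling), and retires blocks that reach an assumed erase limit. Real firmware also maps at page granularity, runs garbage collection and static wear leveling, and protects against power loss; the class name and parameters here are illustrative assumptions.

```python
from typing import Optional

# Hypothetical, heavily simplified flash translation layer (FTL).
# Block-level mapping only; names and parameters are illustrative.
class ToyFTL:
    def __init__(self, physical_blocks: int, endurance: int) -> None:
        self.endurance = endurance                    # assumed erase cycles before a block wears out
        self.erase_count = [0] * physical_blocks      # wear tracked per physical block
        self.data: list[Optional[bytes]] = [None] * physical_blocks
        self.free = set(range(physical_blocks))       # erased blocks ready to program
        self.bad: set[int] = set()                    # retired blocks, never reused
        self.mapping: dict[int, int] = {}             # logical block -> physical block

    def _allocate(self) -> int:
        if not self.free:
            raise RuntimeError("no usable blocks left")
        # Dynamic wear leveling: program the least-erased free block next.
        block = min(self.free, key=lambda b: self.erase_count[b])
        self.free.remove(block)
        return block

    def _erase(self, block: int) -> None:
        self.data[block] = None
        self.erase_count[block] += 1
        if self.erase_count[block] >= self.endurance:
            self.bad.add(block)                       # worn out: retire instead of reusing
        else:
            self.free.add(block)

    def write(self, logical: int, payload: bytes) -> None:
        # NAND can't overwrite in place, so new data goes to a fresh block first...
        new_block = self._allocate()
        self.data[new_block] = payload
        old_block = self.mapping.get(logical)
        self.mapping[logical] = new_block
        # ...and only then is the stale copy erased and recycled.
        if old_block is not None:
            self._erase(old_block)

    def read(self, logical: int) -> Optional[bytes]:
        block = self.mapping.get(logical)
        return None if block is None else self.data[block]


ftl = ToyFTL(physical_blocks=4, endurance=3)
for i in range(5):
    ftl.write(0, f"version {i}".encode())  # the host rewrites the "same" block five times
print(ftl.read(0))      # b'version 4'
print(ftl.erase_count)  # [1, 1, 1, 1]: the wear is spread across all four blocks
```

From the host’s point of view, logical block 0 was simply overwritten five times; underneath, the data moved to a different physical block every time. That hidden indirection is exactly the kind of behavior that never shows up on a spec sheet.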

This room is large because the cost is large, not just in components but in development, validation, and long-term reliability. A lot of what makes one storage product better than another lives here, even if it never shows up on a spec sheet.

Room 5 – NAND at Scale (Massive Room – ~40–50%)

And then you enter the final room, and it’s not subtle.

This space is dominated by the physical reality of NAND itself. Wafers, stacked layers, increasingly dense cell structures that are being pushed right up against their limits. This is where most of the cost sits, and it shows.

What becomes clear in this room is that everything else exists to support what’s happening here. As NAND gets denser, it also becomes more fragile. Error rates go up. Retention becomes more challenging. The margin for error shrinks.

So the controller has to work harder. The firmware has to compensate more. The entire system becomes a balancing act between density, performance, and reliability.
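One source of that squeeze is easy to put numbers on. NAND gains density both by stacking more layers and by storing more bits in each cell, and a cell holding n bits must distinguish 2^n threshold-voltage levels. The sketch below assumes, for illustration only, a fixed normalized voltage window shared evenly by those levels; real level placement, guard bands, and windows vary by vendor and process, but the trend is the point: the gap between neighboring levels collapses as bits per cell go up.

```python
# Illustrative assumption: every cell has the same normalized threshold-voltage
# window (1.0), and its 2**n levels are spread evenly across it. Real level
# placement, guard bands, and windows differ by vendor and process node.
CELL_TYPES = {"SLC": 1, "MLC": 2, "TLC": 3, "QLC": 4}  # bits stored per cell

def level_spacing(bits_per_cell: int, window: float = 1.0) -> float:
    """Gap between adjacent voltage levels when 2**bits levels share one window."""
    levels = 2 ** bits_per_cell
    return window / (levels - 1)

for name, bits in CELL_TYPES.items():
    print(f"{name}: {2 ** bits:2d} levels, spacing {level_spacing(bits):.3f}")

# SLC:  2 levels, spacing 1.000
# MLC:  4 levels, spacing 0.333
# TLC:  8 levels, spacing 0.143
# QLC: 16 levels, spacing 0.067
```

Under this simple model, going from one bit per cell to four shrinks the margin between levels by a factor of 15, so a much smaller amount of charge drift is enough to turn a stored value into a read error.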

This is also where the current moment comes into focus. Enterprise storage, data centers, AI workloads: all of it depends on pushing NAND further while still making it behave predictably.

And that’s getting harder, not easier.

What the Rooms Actually Tell You

If you step back and look at the layout as a whole, the proportions tell a story most people don’t expect.

The parts you interact with (the connector, the form factor, even the brand) take up relatively little space. Most of the system lives in places you don’t see, driven by physical limits and the logic required to work around them.

And that’s exactly what makes the idea of preserving flash memory so complicated.

You can put devices behind glass. You can label formats and timelines. But the most important parts (the controller behavior, the firmware decisions, the way data is managed over time) don’t really sit still long enough to be preserved in the traditional sense.

They evolve, they get replaced, and eventually they disappear along with the hardware that depended on them.

Which makes the idea of a flash memory museum a little strange when you think about it.

Because even if you built one, the most important parts wouldn’t be the easiest to keep.

Author & Content Transparency

This article started from a simple observation raised by the author: for a technology that stores nearly all modern data, flash memory has almost no formal archive or public record of its own evolution. The concept, direction, and technical perspective come from long-term, hands-on experience working with USB storage systems, controller-level behavior, and flash memory deployment across commercial and industrial environments.

The author has been involved in the USB and flash memory space since 2004, with a front-row view of how storage devices have evolved, from early removable formats to modern controller-driven systems. Looking back, it’s not unreasonable to say that if the industry had recognized how little would be preserved, someone could have started a proper archive or museum years ago. Instead, most of that history has been left scattered, replaced, or quietly lost as each new generation of technology moved forward.

AI tools were used in the creation of this article to assist with structure, flow, and overall readability. However, all core ideas, technical insights, and conclusions were developed and reviewed by the author to ensure accuracy and relevance.

The images included in this article are not stock photography. They are visual representations created with the help of AI tools, based on the scenarios and concepts described in the content. These visuals are intended to illustrate ideas that are difficult to capture through traditional photography, particularly when dealing with internal components, historical formats, or abstract system behavior.